Architecture

Secure, Scalable Pull-Based Architecture

Overview

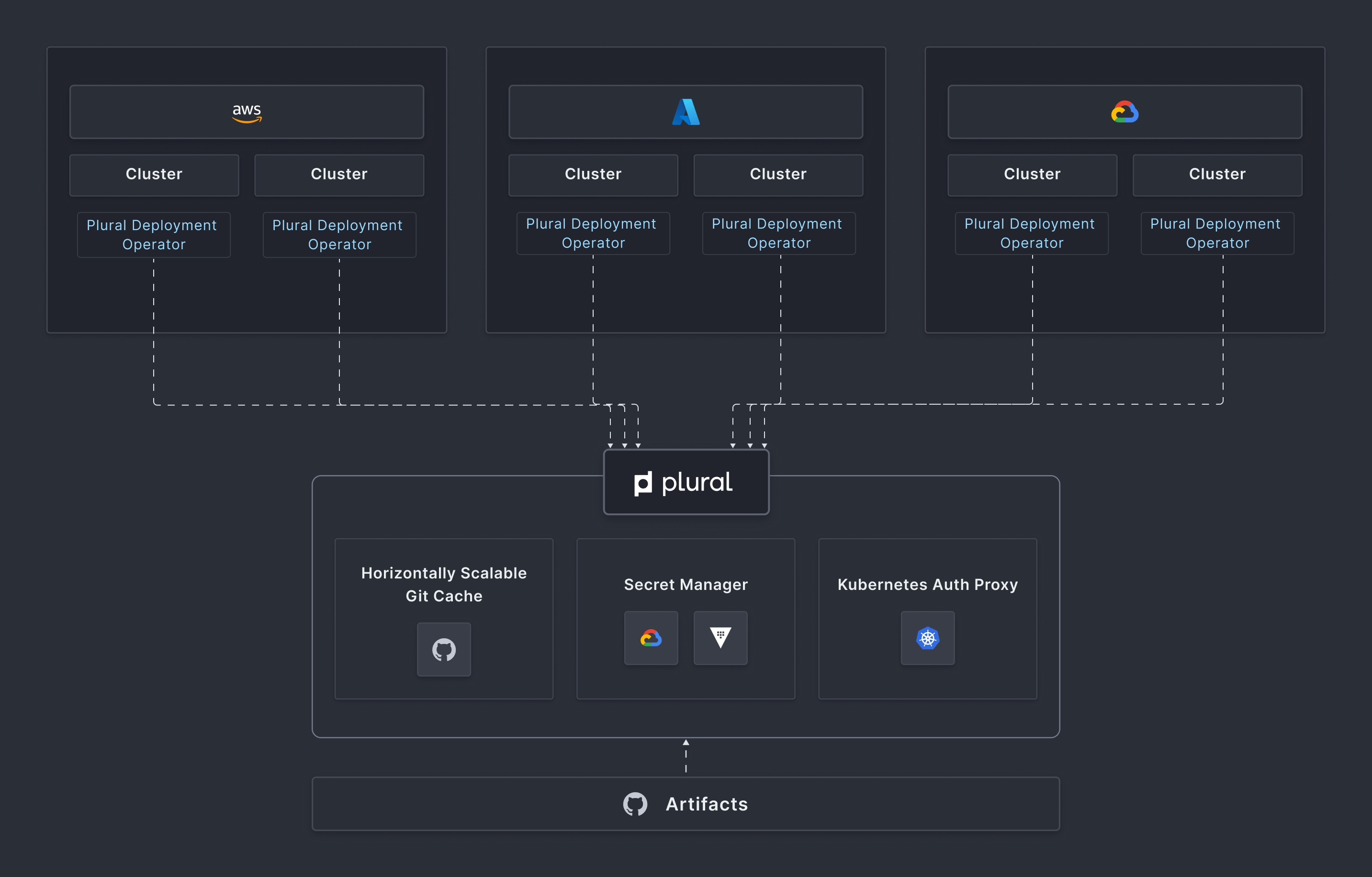

Plural is based on a scalable, secure, agent-based pull architecture. It doesn't require direct access to any of the clusters it deploys to, meaning it can manage workloads in any cloud, on-prem, on the edge, or even on a local laptop running KIND. Further since it doesn't require networking intensive kubernetes watch streams, central access to kubernetes, or rely on single-mastered operator control loops, it should scale to virtually any size kubernetes fleet. We also enhance your kubernetes setup with an auth proxy, allowing you to have full visibility to your workloads without compromising the network security of your setup or require the creation of complex multi-cloud networking setups.

Here's a quick diagram of the setup:
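To make the pull model concrete, here is a minimal Python sketch of an agent-side reconcile loop. It is illustrative only: `Service`, `Agent`, `poll`, and `sync_once` are hypothetical names, not Plural's API, and the control plane is faked with a plain callable. The property it shows is the one described above: the agent only makes outbound calls (the injected `poll`), so nothing needs to reach into the cluster, and a service is applied only when its revision changes.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Service:
    name: str
    revision: str  # e.g. the git SHA the control plane resolved for this service


@dataclass
class Agent:
    # Hypothetical stand-in for an outbound call from the agent to the control plane.
    # The agent always initiates the connection; nothing dials into the cluster.
    poll: Callable[[], Dict[str, Service]]
    # Revision last applied for each service, as tracked by this agent.
    applied: Dict[str, str] = field(default_factory=dict)

    def apply(self, svc: Service) -> None:
        # Placeholder: a real agent would render manifests and apply them
        # to its own cluster using in-cluster credentials.
        self.applied[svc.name] = svc.revision

    def sync_once(self) -> List[str]:
        """Poll once and apply only the services whose revision changed."""
        changes: List[str] = []
        for name, svc in self.poll().items():
            if self.applied.get(name) != svc.revision:
                self.apply(svc)
                changes.append(f"apply {name}@{svc.revision}")
        return changes

    def run(self, interval_s: float = 30.0) -> None:
        # Poll forever; the interval is an arbitrary illustrative default.
        while True:
            self.sync_once()
            time.sleep(interval_s)


if __name__ == "__main__":
    desired = {"web": Service("web", "a1b2c3")}
    agent = Agent(poll=lambda: desired)
    print(agent.sync_once())  # ['apply web@a1b2c3']
    print(agent.sync_once())  # [] (nothing changed, so nothing is applied)
```

Because the loop is driven by the agent's own polling rather than a central watch stream, adding another cluster adds another independent poller instead of another long-lived connection the control plane must hold open.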

Control Plane

The Control Plane layer is a full-stack service deployable onto any Kubernetes cluster you designate as your management cluster. It contains a few main components:

- Horizontally scalable Git cache - designed to ingest as many Git repos as you'd like and shard them automatically and efficiently across your cluster.

- Configuration Management - supports reconfigurable backends and makes it easy to parameterize services with information like hostnames, Docker image tags, and other secret and non-secret values.

- Auth Proxy - a secure, bidirectional gRPC channel initiated by the deployment agent. It is used to make Kubernetes API calls wherever a cluster lives, and it gives you full dashboarding capabilities from the Plural UI.

- Cluster API Providers - Plural natively integrates with Cluster API, letting you create and manage new clusters at scale and fork your own Kubernetes cluster APIs on top of existing setups, whether managed services like EKS, AKS, and GKE or on-prem solutions like Rancher.
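This page doesn't say how the Git cache shards repos, so the following is a hedged Python sketch of one common technique, rendezvous (highest-random-weight) hashing, for spreading repos across cache replicas. It is not Plural's implementation; the replica names, `owner`, and `assign` are hypothetical. The technique suits a horizontally scaled cache because every replica can compute ownership independently, and adding a replica only moves repos onto the new replica.

```python
import hashlib
from typing import Dict, List


def _score(replica: str, repo_url: str) -> int:
    # Deterministic 64-bit weight for a (replica, repo) pair.
    digest = hashlib.sha256(f"{replica}|{repo_url}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def owner(repo_url: str, replicas: List[str]) -> str:
    """Rendezvous hashing: the replica with the highest score owns the repo."""
    if not replicas:
        raise ValueError("no git cache replicas available")
    return max(replicas, key=lambda r: _score(r, repo_url))


def assign(repos: List[str], replicas: List[str]) -> Dict[str, List[str]]:
    # Group repos by owning replica (every replica appears, even if empty).
    shards: Dict[str, List[str]] = {r: [] for r in replicas}
    for repo in repos:
        shards[owner(repo, replicas)].append(repo)
    return shards


if __name__ == "__main__":
    repos = [f"https://github.com/example/repo-{i}.git" for i in range(100)]
    three = ["cache-0", "cache-1", "cache-2"]
    four = three + ["cache-3"]
    moved = [r for r in repos if owner(r, three) != owner(r, four)]
    # Scaling out only moves repos onto the new replica; none shuffle between old ones.
    print(all(owner(r, four) == "cache-3" for r in moved))  # True
    print({r: len(v) for r, v in assign(repos, four).items()})
```

A consistent-hash ring would give a similar minimal-movement property; rendezvous hashing is shown here only because it fits in a few lines without virtual nodes.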

We provide simple installers if you'd like to deploy the control plane to a Kubernetes cluster already in your fleet, or you can use our own Kubernetes setup through the standard Plural install flow.

Deployment Agent

A thin deployment agent is installed on each cluster and managed by Plural from then on. It continuously polls the control plane for new services to apply and, if there are any changes, applies them to your cluster. It can also do a few other things, such as: